
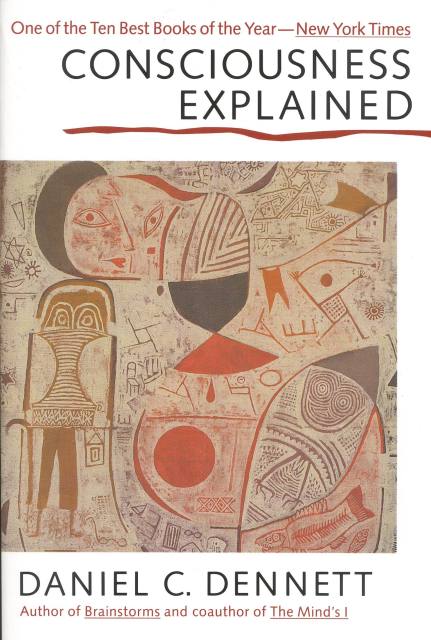

Consciousness Explained

By Daniel Dennett

Formats and Prices

- On Sale: Oct 20, 1992
- Page Count: 528 pages
- Publisher: Back Bay Books
- ISBN-13: 9780316180665

- Format: Trade Paperback
- Price: $23.99 ($31.99 CAD)


Daniel Dennett’s “brilliant” exploration of human consciousness — named one of the ten best books of the year by the New York Times — is a masterpiece beloved by both scientific experts and general readers (New York Times Book Review).

Consciousness Explained is a full-scale exploration of human consciousness. In this landmark book, Daniel Dennett refutes the traditional, commonsense theory of consciousness and presents a new model, based on a wealth of information from the fields of neuroscience, psychology, and artificial intelligence. Our current theories about the conscious life of people, animals, and even robots are transformed by the new perspectives found in this book.

“Dennett is a witty and gifted scientific raconteur, and the book is full of fascinating information about humans, animals, and machines. The result is highly digestible and a useful tour of the field.” —Wall Street Journal
