We Know It When We See It

What the Neurobiology of Vision Tells Us About How We Think

Formats and Prices

- On Sale: Mar 10, 2020
- Page Count: 272 pages
- Publisher: Basic Books
- ISBN-13: 9781541618497

Format:

- ebook: $17.99 ($22.99 CAD)
- Hardcover: $28.00 ($35.00 CAD)
- Audiobook Download (Unabridged)

This item is a preorder. Your payment method will be charged immediately, and the product is expected to ship on or around March 10, 2020. This date is subject to change due to shipping delays beyond our control.

Buy from Other Retailers:

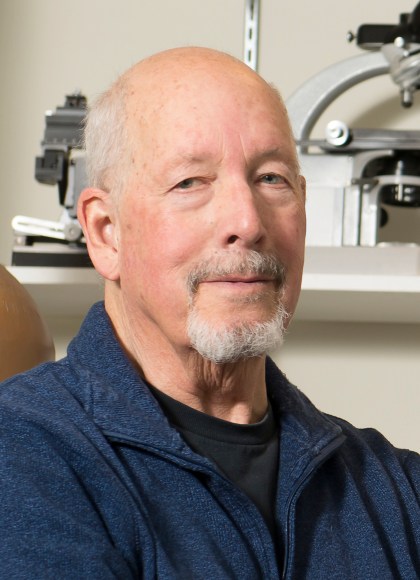

A Harvard researcher investigates the human eye in this insightful account of what vision reveals about intelligence, learning, and the greatest mysteries of neuroscience.

Spotting a face in a crowd is so easy that you take it for granted. But how you do it is one of science's great mysteries. Vision is also involved in much of what your brain does, so explaining how it works reveals more than just how you see. In We Know It When We See It, Harvard neuroscientist Richard Masland tackles vital questions about how the brain processes information (how it perceives, learns, and remembers) through a careful study of the inner life of the eye.

Covering everything from what happens when light hits your retina, to the increasingly sophisticated nerve nets that turn that light into knowledge, to what a computer algorithm must be able to do before it can be called truly “intelligent,” We Know It When We See It is a profound yet approachable investigation into how our bodies make sense of the world.