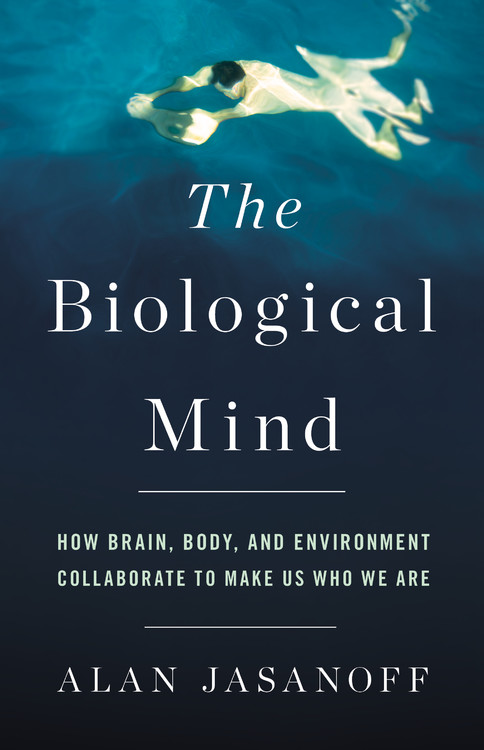

The Biological Mind

How Brain, Body, and Environment Collaborate to Make Us Who We Are

Formats and Prices

- On Sale: Mar 13, 2018
- Page Count: 304 pages
- Publisher: Basic Books
- ISBN-13: 9780465052684

Format:

- Hardcover: $37.00 ($47.00 CAD)
- ebook: $19.99 ($25.99 CAD)
- Audiobook Download (Unabridged): $27.99

To many, the brain is the seat of personal identity and autonomy. But the way we talk about the brain is often rooted more in mystical conceptions of the soul than in scientific fact. This blinds us to the physical realities of mental function. We ignore bodily influences on our psychology, from chemicals in the blood to bacteria in the gut, and overlook the ways that the environment affects our behavior, via factors varying from subconscious sights and sounds to the weather. As a result, we alternately overestimate our capacity for free will or equate brains to inorganic machines like computers. But a brain is neither a soul nor an electrical network: it is a bodily organ, and it cannot be separated from its surroundings. Our selves aren’t just inside our heads — they’re spread throughout our bodies and beyond. Only once we come to terms with this can we grasp the true nature of our humanity.

- "This philosophical puzzle has been posed, in various forms, for centuries and is one of the starting points for Alan Jasanoff's elegant and spirited attack on what he calls our 'cerebral mystique' ... A lucid primer on current brain science that takes the form of a passionate warning about its limitations." (Wall Street Journal)

- "The Biological Mind is chock-full of fun facts that entertain. And best of all, it makes you think. I found myself debating with Jasanoff in my head as I read -- surely a sign of a worthy book." (New York Times Book Review)

- "Alan Jasanoff's The Biological Mind...stylishly sums up the state of current knowledge while emphasizing the limitations of neuroscientific understanding." (Wall Street Journal)

- "In this powerful treatise, neurological engineer Alan Jasanoff issues a corrective to the 'cerebral mystique.'" (Nature)

- "The book features a learned and experienced author who has the ability to take complex concepts of neuroanatomy and neurophysiology and explain them in easy to understand descriptions. The intelligent reader interested in 21st century understanding of the human brain and particularly those who may be involved in mental or physical health will find this book useful and interesting." (The New York Journal of Books)

- "[Jasanoff's] clear, lively writing reveals how our emotions, such as the fight-or-flight response and the suite of thoughts and actions associated with stress, provide strong evidence for a brain-body connection." (Science News)

- "Taking the brain off of its pedestal, Jasanoff offers an exhaustive, comprehensible, and at times playful (e.g., why do humans now study brains instead of eat them?) look at the brain. Appropriate for both neuroscientists as well as general readers interested in gaining a better understanding of this vital organ." (Library Journal)

- "Jasanoff writes with admirable clarity as he argues that the modern tendency of neuroscience to take a 'brain-centered view' that overlooks external sources of behavior can lead to epistemological dead ends." (Kirkus Reviews)

- "Jasanoff delivers a highly readable and enjoyable exploration of a series of compelling questions relating to the human experience." (CHOICE)

- "Neuroscientist Alan Jasanoff has identified a widespread 'Brain Mystique'--a collection of folk theories about the brain that are scientifically false. In The Biological Mind, Jasanoff dispels these theories while leading the reader on an engaging tour of real neuroscience, from the brain to the body to the social and physical world." (George Lakoff, coauthor of The Neural Mind)

- "Any book that opens with a historical account of the nutritional merits of eating animal brains and concludes with an imaginary account of the author's brain being removed from his body to take up residence in a vat is certainly worth a read, and Alan Jasanoff's The Biological Mind is precisely that. Thought-provoking and enjoyable, this book will provide readers with a new conception of who they are." (Robert Whitaker, author of Anatomy of an Epidemic)

- "The dark side of all the wonderful new neurotechnology at researchers' fingertips is that too many experts are now over-simplifying mental illness, reducing it to mere descriptions of brain physiology. Alan Jasanoff does an outstanding job of bringing much needed nuance, humanity, and compassion to the way we think about mental illness and the brain." (Sally Satel, M.D., Lecturer in Psychiatry at the Yale University School of Medicine)

- "Alan Jasanoff's The Biological Mind provides a provocative and accessible neuroscientific defense of the 'extended mind' thesis--the idea that we are much more than our brains, and even the bodies in which they are normally housed. By the conclusion, readers will be left wondering whether Jasanoff's findings suggest something even more radical: that our brains are actually platforms for launching any number of versions of who we really are." (Steve Fuller, Auguste Comte Chair in Social Epistemology at the University of Warwick and author of Humanity 2.0)